Solana

Network Throughput Limits

Context

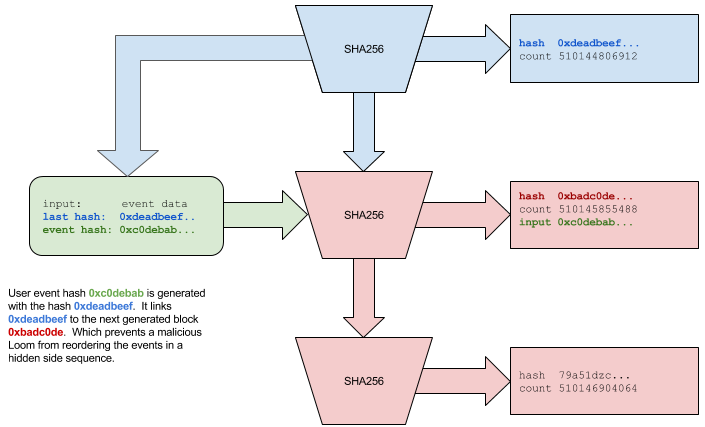

This figure appears in the 'System Architecture' section, in the discussion of the theoretical and practical throughput limits of a Solana PoH generator node. The section identifies bandwidth and processing constraints that bound the maximum transaction throughput achievable with current hardware, and analyzes how each pipeline stage contributes to or mitigates these limits.

What This Figure Shows

The diagram maps the bandwidth and compute constraint at each stage of the TPU pipeline. At the Fetch stage, network ingress bandwidth limits how many transaction bytes can arrive per second. At the SigVerify stage, GPU (CUDA) throughput sets the ceiling on signature verifications per second. At the Banking stage, CPU core count and Sealevel parallelism determine how quickly non-conflicting sets of transactions can be executed. Finally, the PoH hash rate on the leader's single core determines the maximum tick frequency used to timestamp transaction batches. With commodity 2020-era hardware (a 1 Gbps network link, a modern GPU, and a multi-core CPU), the diagram shows that the binding constraint is network bandwidth rather than CPU or GPU compute. Because compute still has headroom, hardware improvements, and faster network links in particular, should continue to raise Solana's throughput ceiling.
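The bottleneck argument can be sketched as a simple ceiling model. Each pipeline stage caps throughput in transactions per second, and the pipeline as a whole runs no faster than its slowest stage. The network ceiling is link bandwidth divided by bits per transaction. This is a minimal sketch, not Solana's implementation: the helper names are hypothetical, the 176-byte transaction size is an assumed minimal transaction, and the GPU and CPU ceilings in the usage example are placeholder values, not measurements. The PoH stage is left out because it bounds tick frequency rather than transaction count.

```python
from typing import Dict, Tuple

# Illustrative ceiling model for a pipelined leader node.
# Assumption: each stage is an independent throughput ceiling (tx/s).


def network_ceiling_tps(link_bits_per_sec: float, tx_size_bytes: int) -> float:
    """Transactions per second that fit through the ingress link (Fetch stage)."""
    return link_bits_per_sec / (tx_size_bytes * 8)


def bottleneck(stage_ceilings: Dict[str, float]) -> Tuple[str, float]:
    """Sustained pipeline throughput is bounded by the slowest stage."""
    stage = min(stage_ceilings, key=stage_ceilings.get)
    return stage, stage_ceilings[stage]


if __name__ == "__main__":
    ceilings = {
        # 1 Gbps link from the text; 176-byte transaction size is an assumption
        "fetch (network)": network_ceiling_tps(1e9, 176),
        "sigverify (GPU)": 1_000_000.0,  # hypothetical placeholder
        "banking (CPU)": 2_000_000.0,    # hypothetical placeholder
    }
    stage, tps = bottleneck(ceilings)
    print(f"{stage}: {tps:,.0f} tx/s")
```

With a 1 Gbps link and 176-byte transactions, the network ceiling works out to roughly 710,000 transactions per second, so under these placeholder compute figures the Fetch stage is the bottleneck.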

Significance

This throughput analysis grounds Solana's performance claims in concrete hardware specifications and makes the scaling roadmap explicit: as network bandwidth, GPU performance, and CPU parallelism improve (the latter two driven largely by Moore's Law), the system's throughput is expected to scale with them without requiring protocol changes. The diagram also identifies the current limiting component, which shows where engineering investment yields the greatest throughput gains: only improving the bottleneck raises end-to-end throughput.
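The point about guiding engineering investment follows from the same kind of ceiling model. Upgrading a stage that is not the bottleneck leaves end-to-end throughput unchanged, and upgrading the bottleneck helps only until the next-tightest stage binds. A minimal sketch, assuming independent per-stage ceilings; the stage names and tx/s values are hypothetical placeholders, not figures from the diagram.

```python
from typing import Dict

# Illustrative upgrade analysis: the pipeline runs at its slowest stage.
# All ceiling values used below are hypothetical placeholders.


def pipeline_tps(ceilings: Dict[str, float]) -> float:
    """End-to-end throughput of a pipeline of independent stage ceilings."""
    return min(ceilings.values())


def gain_from_upgrade(ceilings: Dict[str, float], stage: str, factor: float) -> float:
    """Change in pipeline throughput (tx/s) if one stage's ceiling is multiplied by `factor`."""
    upgraded = dict(ceilings)  # copy so the caller's dict is not mutated
    upgraded[stage] *= factor
    return pipeline_tps(upgraded) - pipeline_tps(ceilings)


if __name__ == "__main__":
    ceilings = {
        "network": 700_000.0,
        "gpu_sigverify": 1_000_000.0,
        "cpu_banking": 2_000_000.0,
    }
    for stage in ceilings:
        print(stage, gain_from_upgrade(ceilings, stage, 2.0))
```

In this example, doubling the network ceiling from 700,000 to 1,400,000 tx/s raises system throughput by only 300,000 tx/s, because the GPU stage then becomes the limit; doubling either compute stage adds nothing.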